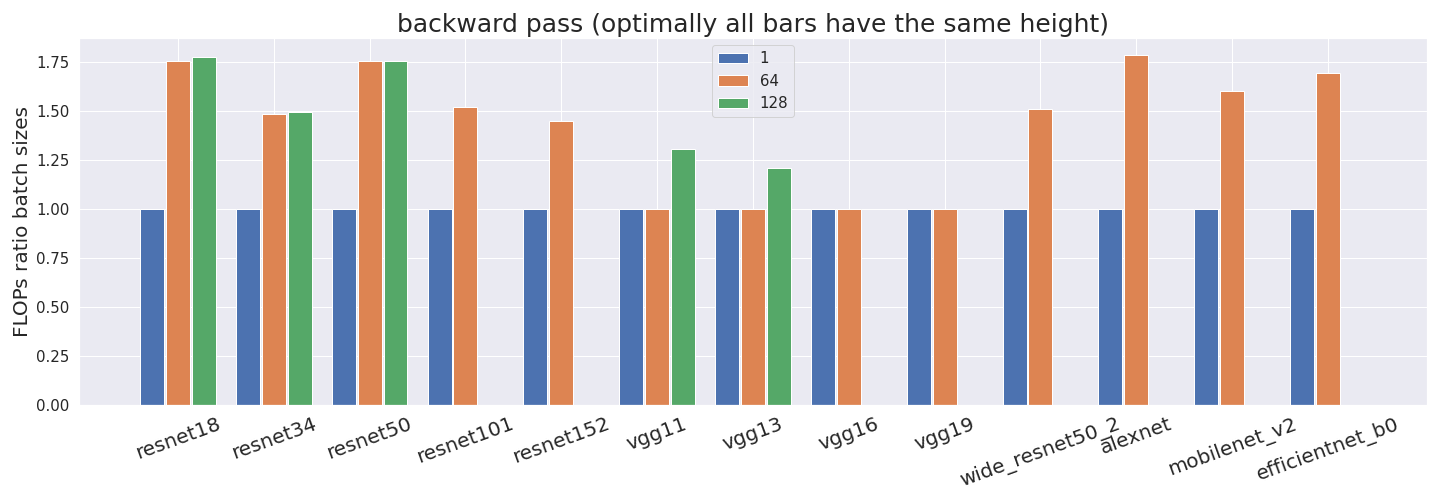

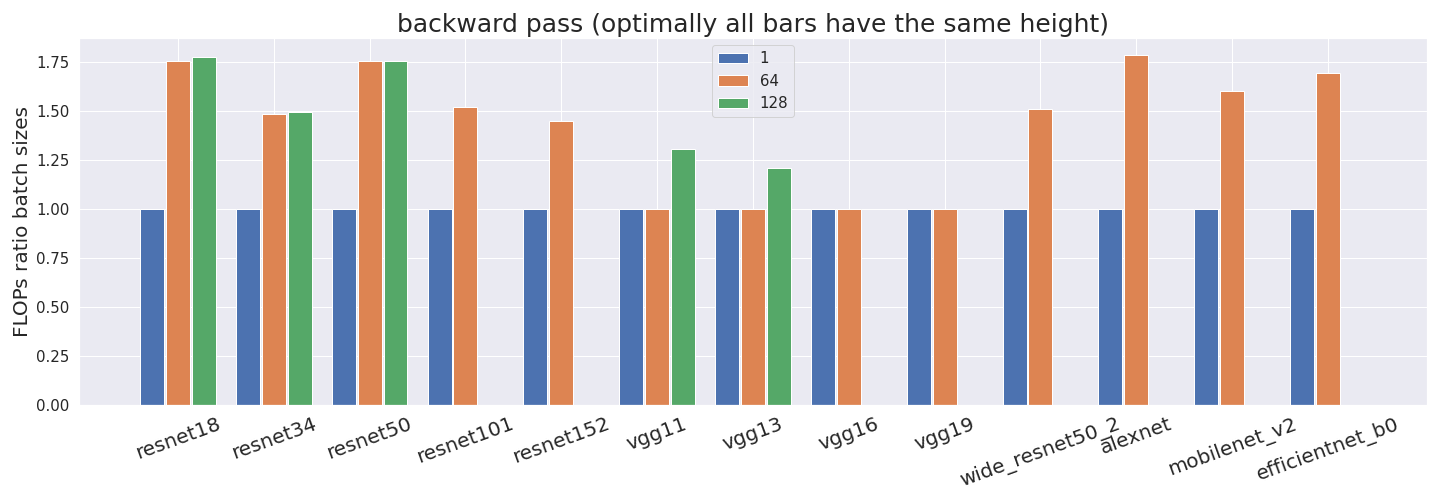

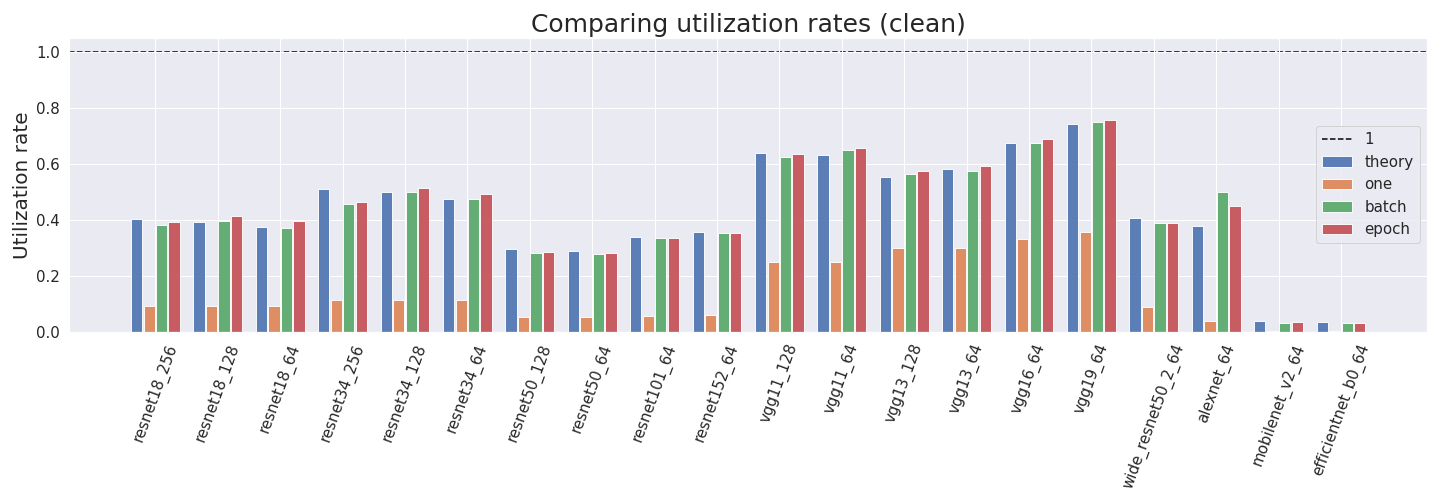

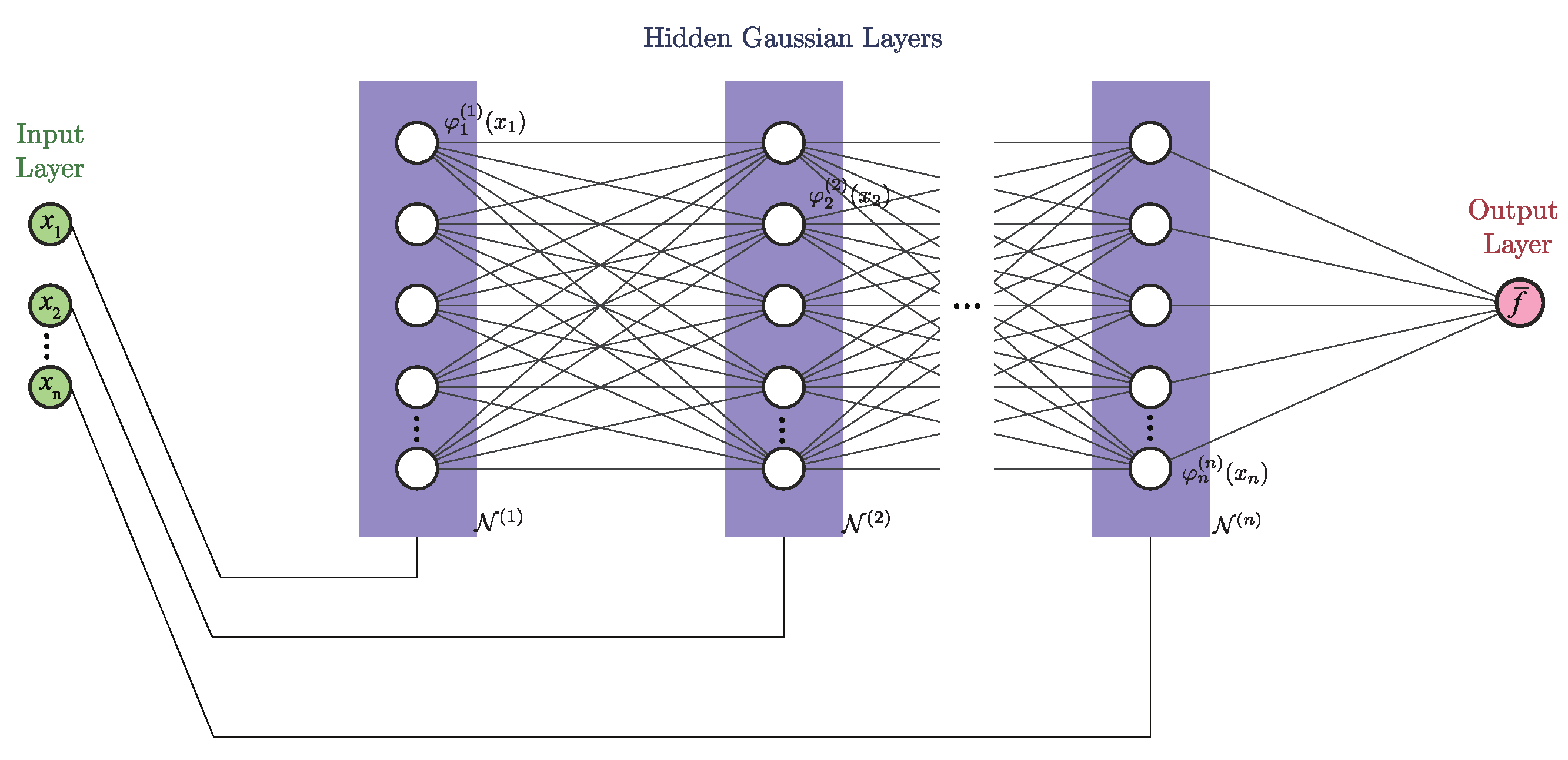

Computing the utilization rate for multiple Neural Network architectures.

Algorithms, Free Full-Text

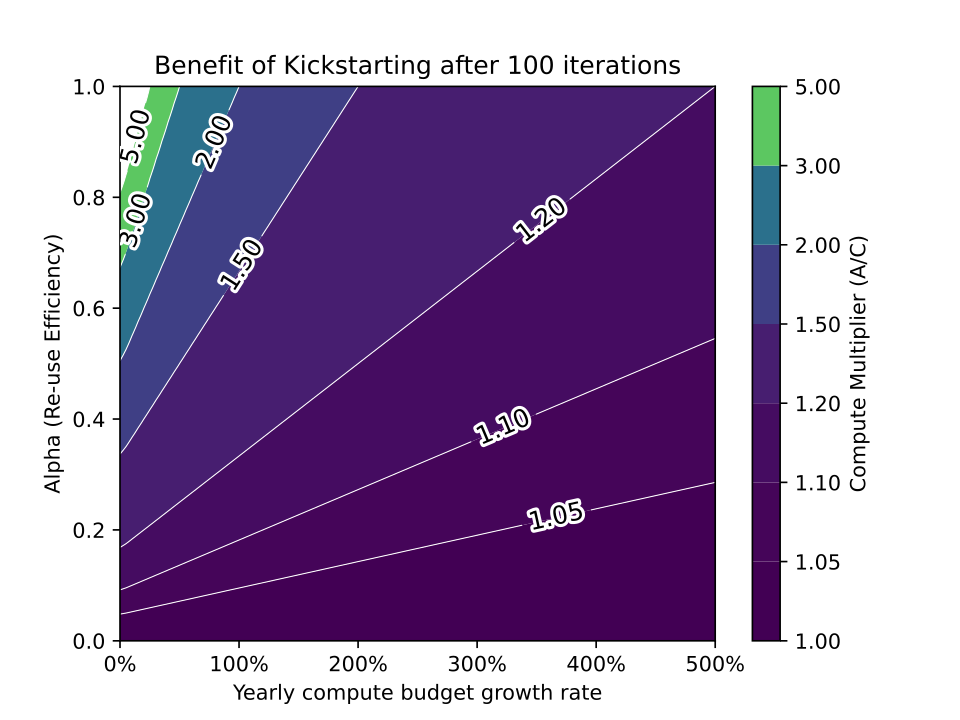

How to Measure FLOP/s for Neural Networks Empirically? – Epoch

NVIDIA Clocks World's Fastest BERT Training Time and Largest Transformer Based Model, Paving Path For Advanced Conversational AI

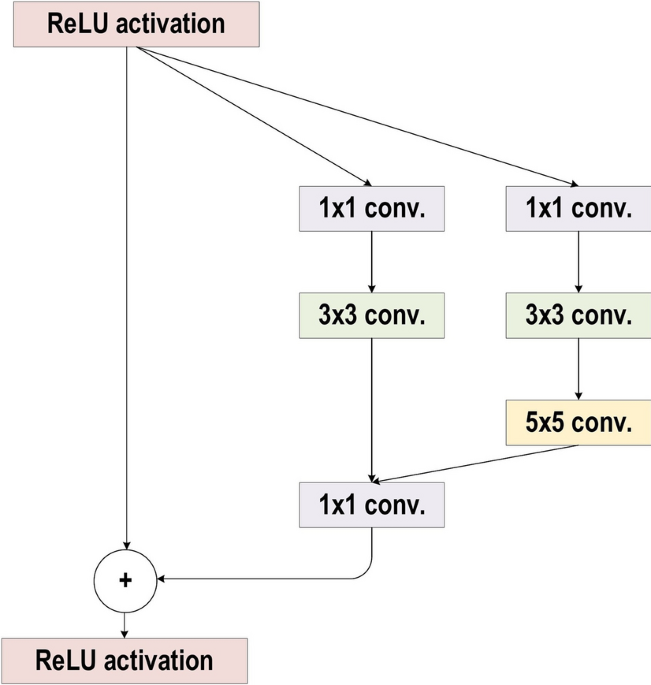

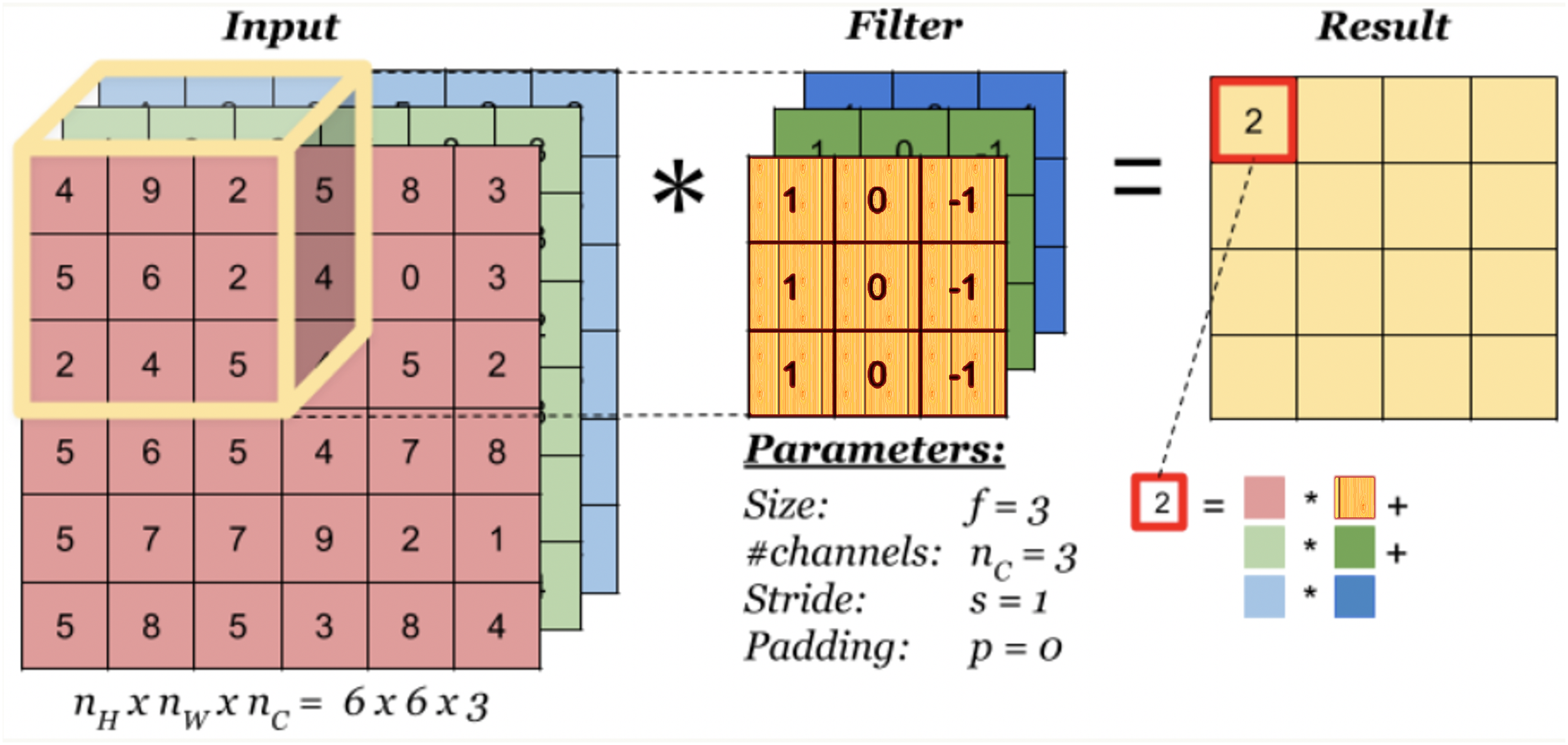

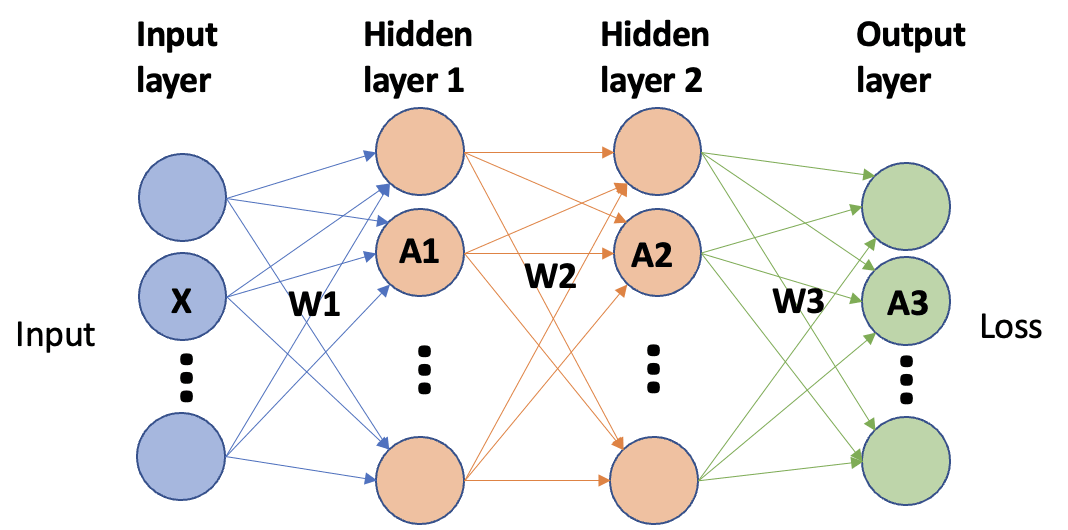

Review of deep learning: concepts, CNN architectures, challenges, applications, future directions, Journal of Big Data

Multi-objective simulated annealing for hyper-parameter optimization in convolutional neural networks [PeerJ]

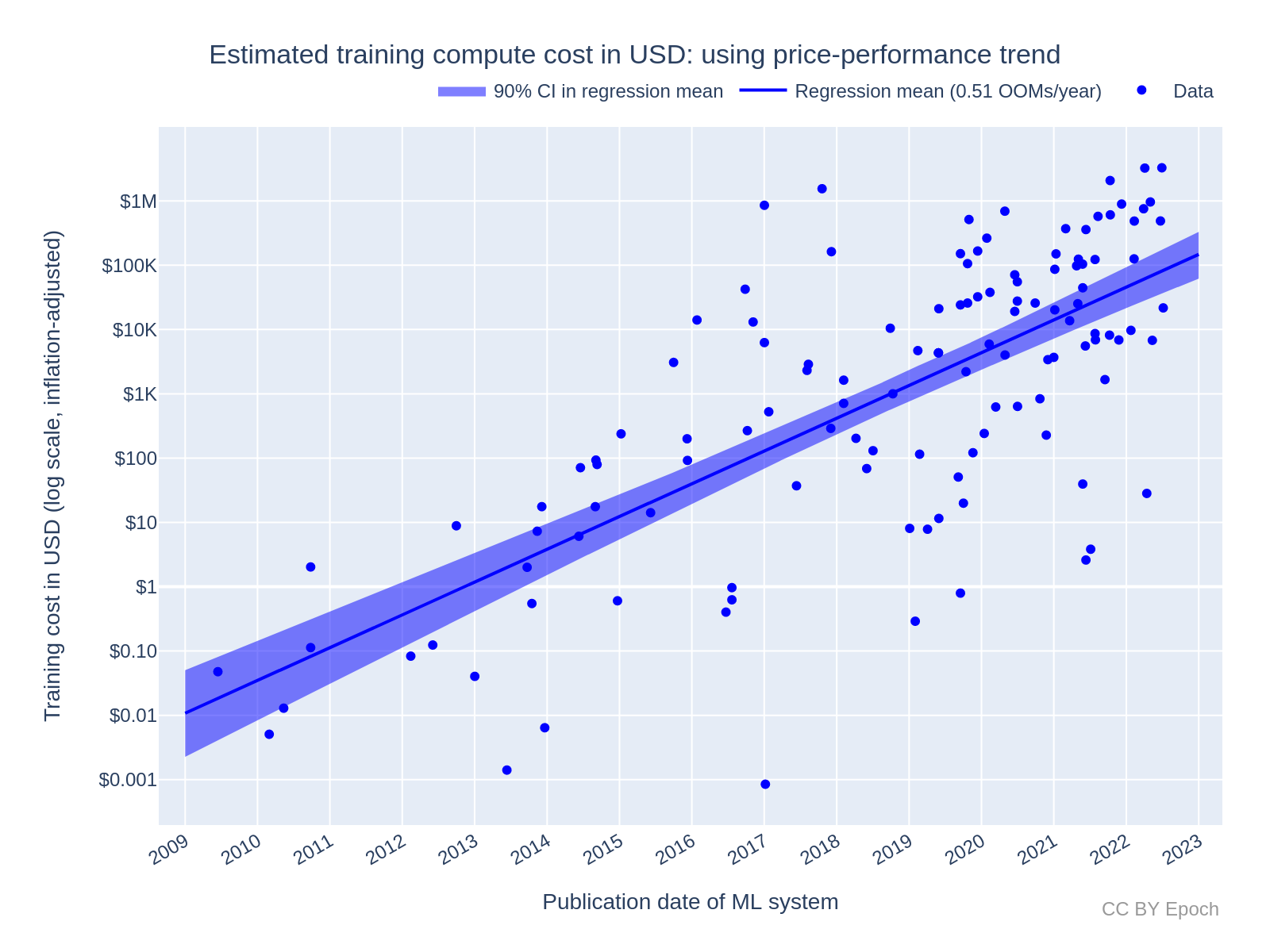

Trends in the Dollar Training Cost of Machine Learning Systems – Epoch

Convolutional neural network-based respiration analysis of electrical activities of the diaphragm

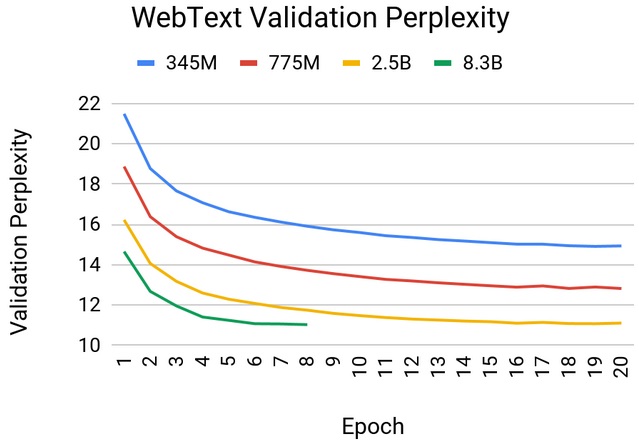

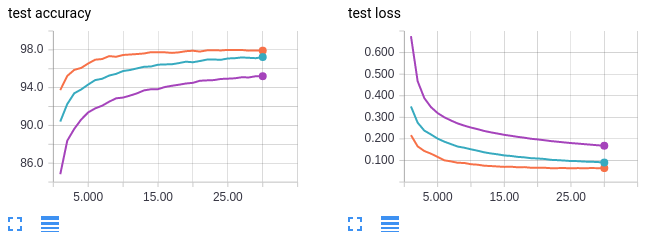

Effect of batch size on training dynamics, by Kevin Shen, Mini Distill

When do Convolutional Neural Networks Stop Learning?

How to calculate the amount of memory needed for a deep network - Quora

How to measure FLOP/s for Neural Networks empirically? — LessWrong

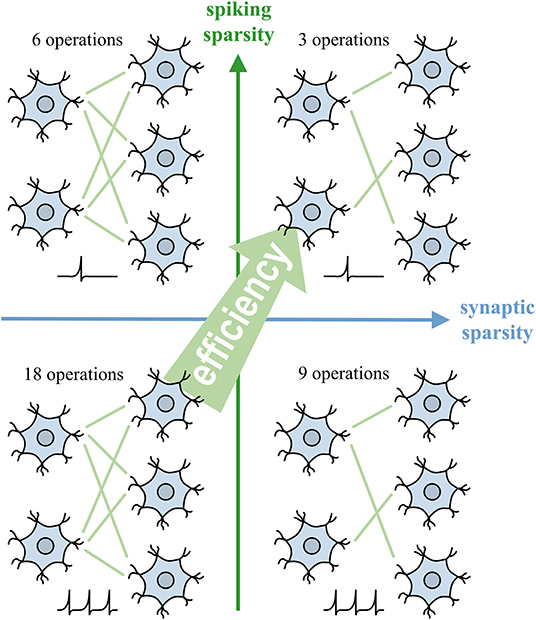

Backpropagation With Sparsity Regularization for Spiking Neural Network Learning – Frontiers
