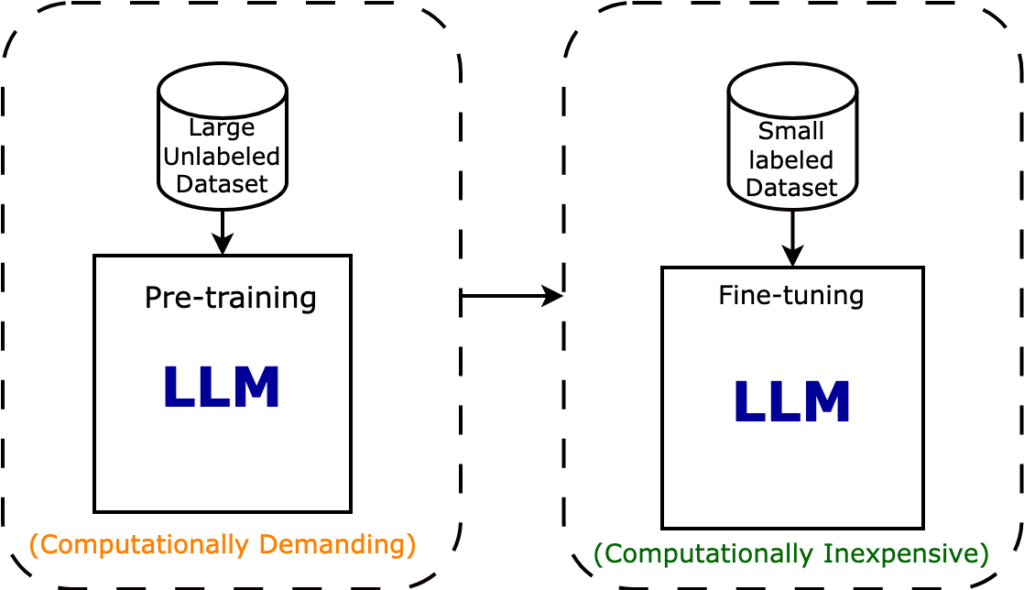

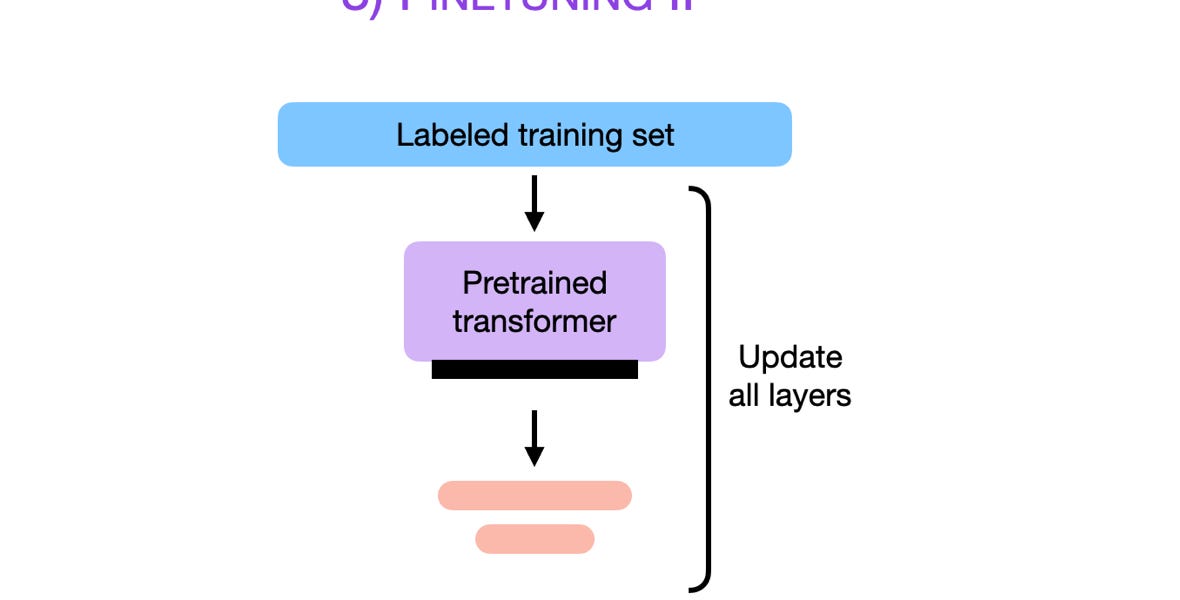

Fine-tuning is the process of adjusting the parameters of a pre-trained large language model (LLM) so that it performs better in a specific domain or application.
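To make the definition concrete, here is a minimal sketch of the core idea: start from parameters that are already trained, then nudge them with gradient descent on a small domain-specific dataset. It is a toy, not an LLM workflow. A one-feature linear model stands in for the language model, and `pretrained_params`, `domain_data`, and `fine_tune` are hypothetical names chosen for illustration.

```python
# Toy illustration only: a one-feature linear model stands in for an LLM.
# All names and numbers here are assumptions made for the example.

def predict(params, x):
    w, b = params
    return w * x + b

def mse(params, data):
    """Mean squared error of the model on (x, y) pairs."""
    return sum((predict(params, x) - y) ** 2 for x, y in data) / len(data)

def fine_tune(pretrained, data, lr=0.05, steps=500):
    """Start from pre-trained parameters and take small gradient steps on domain data."""
    w, b = pretrained
    n = len(data)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical "pre-trained" weights, learned elsewhere on general data.
pretrained_params = (1.0, 0.0)

# Small domain-specific dataset that follows y = 1.5x + 0.5.
domain_data = [(0.0, 0.5), (1.0, 2.0), (2.0, 3.5), (3.0, 5.0)]

tuned_params = fine_tune(pretrained_params, domain_data)
loss_before = mse(pretrained_params, domain_data)
loss_after = mse(tuned_params, domain_data)
```

Comparing `loss_before` with `loss_after` shows the effect: the tuned parameters fit the domain data more closely than the pre-trained ones. Real LLM fine-tuning follows the same pattern at a much larger scale, usually through a deep learning framework rather than hand-written gradients.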

Mastering Language Models: Fine-Tuning Best Practices for Stellar

Supervised Fine-tuning: customizing LLMs

Fine Tuning Open Source Large Language Models (PEFT QLoRA) on Azure Machine Learning, by Keshav Singh

The complete guide to LLM fine-tuning - TechTalks

Mastering Generative AI Interactions: A Guide to In-Context Learning and Fine-Tuning

LLM Fine-Tuning: What Works and What Doesn't?

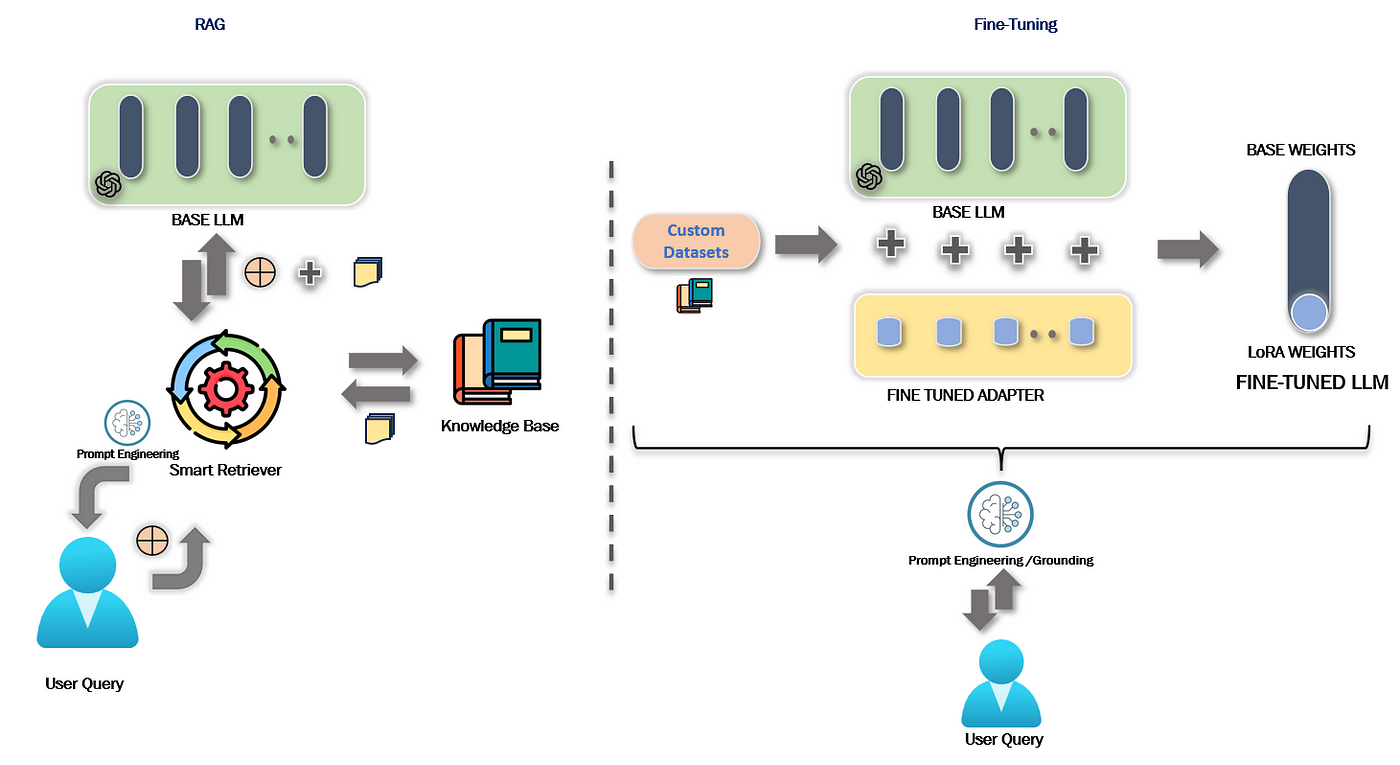

LLMs, RAG, and Fine-Tuning: A Hands-On Guided Tour

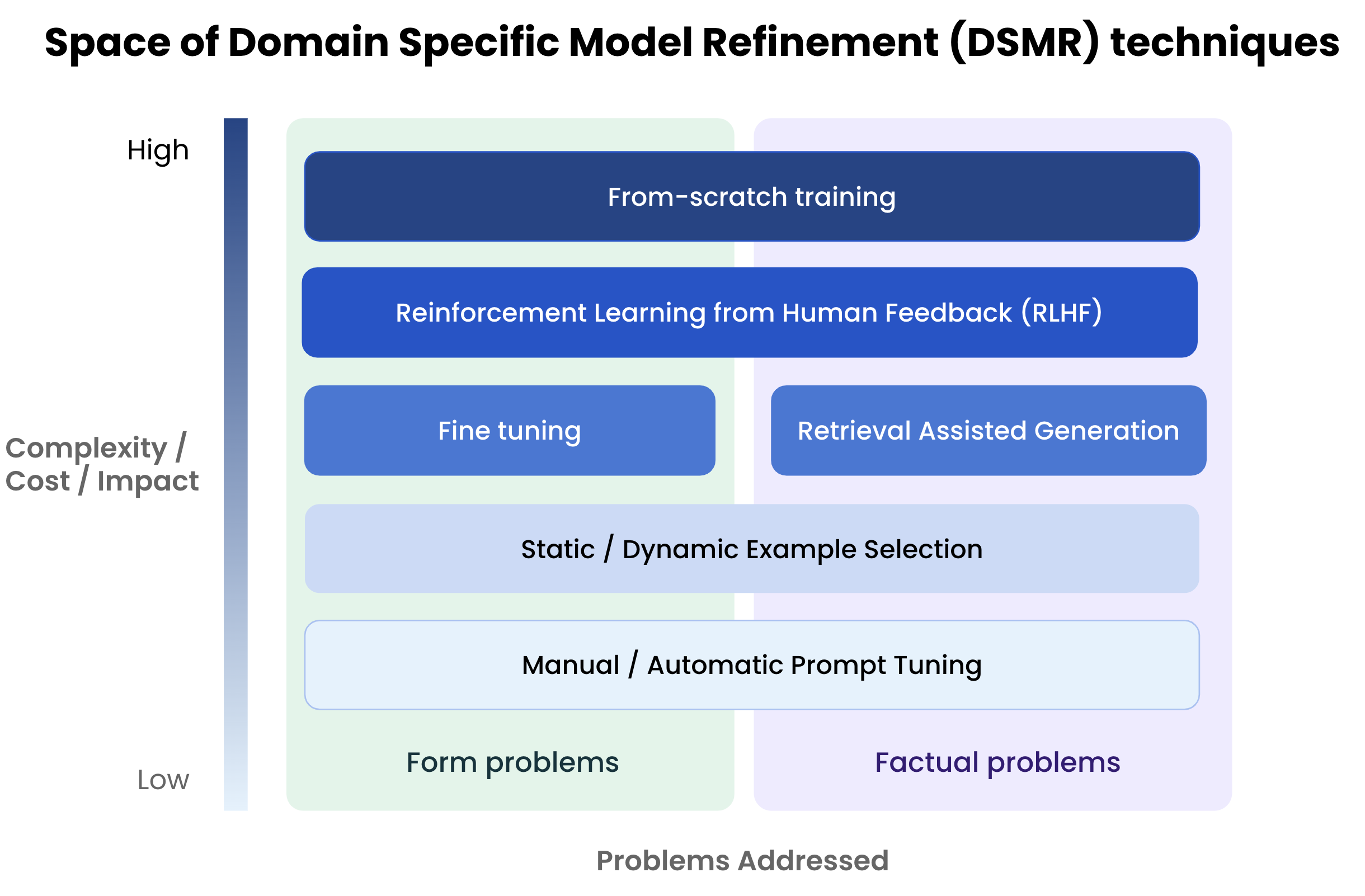

To fine-tune or not to fine-tune., by Michiel De Koninck

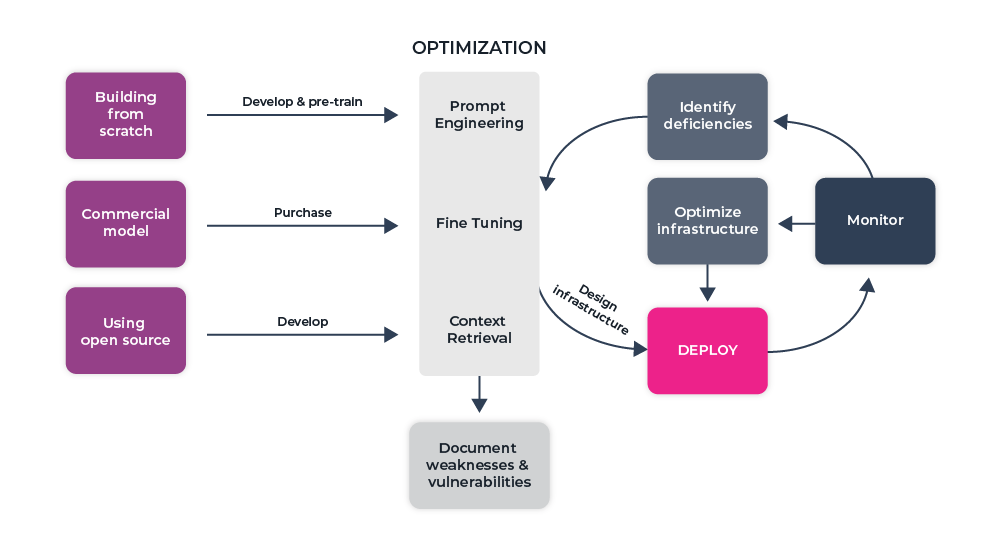

Customizing and fine-tuning LLMs: What you need to know - The

A Beginner's Guide to Fine-Tuning Large Language Models

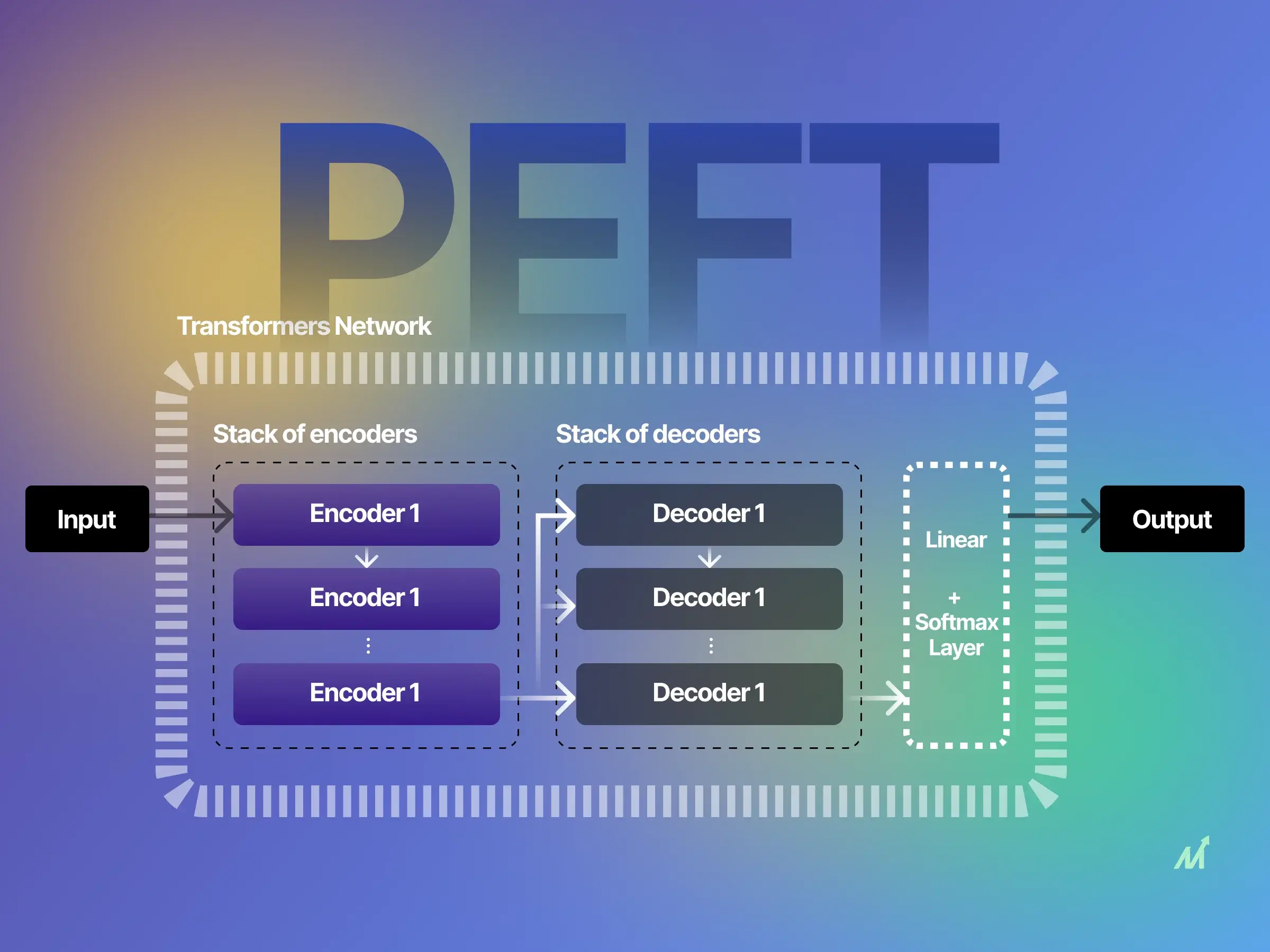

Parameter-Efficient Fine-Tuning (PEFT) of LLMs: A Practical Guide

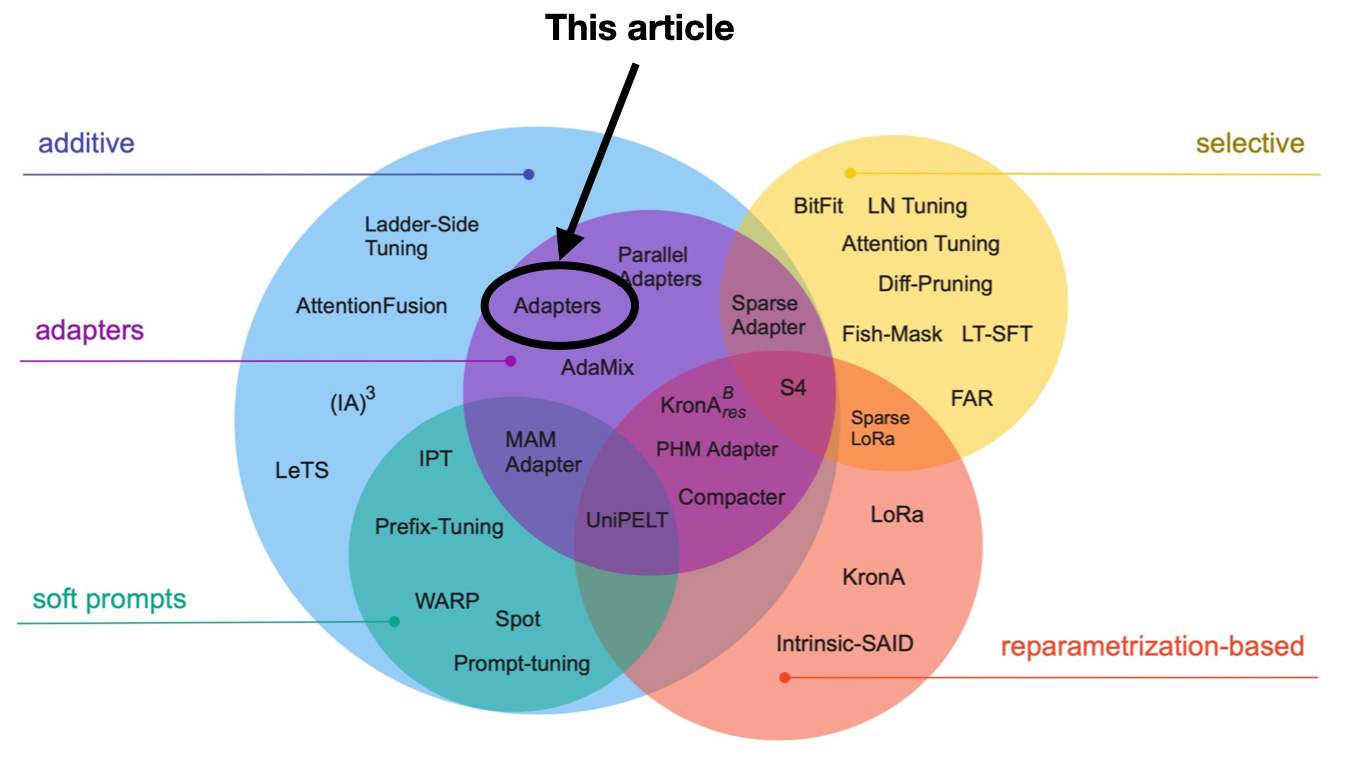

Finetuning LLMs Efficiently with Adapters

Fine-tuning Large Language Models: Complete Optimization Guide

Best Practices for Large Language Model (LLM) Deployment - Arize AI

Finetuning Large Language Models