Together, the developer, claims it is the largest public dataset specifically for language model pre-training.

RedPajama training progress at 440 billion tokens

RedPajama Reproducing LLaMA🦙 Dataset on 1.2 Trillion Tokens, by Angelina Yang

RedPajama's Giant 30T Token Dataset Shows that Data is the Next Frontier in LLMs

RedPajama, a project to create leading open-source models, starts by reproducing LLaMA training dataset of over 1.2 trillion tokens

cerebras/SlimPajama-627B · Datasets at Hugging Face

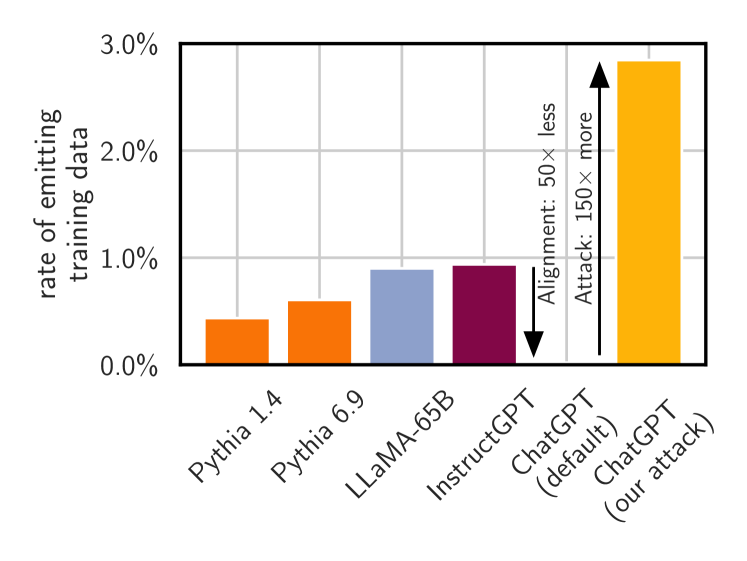

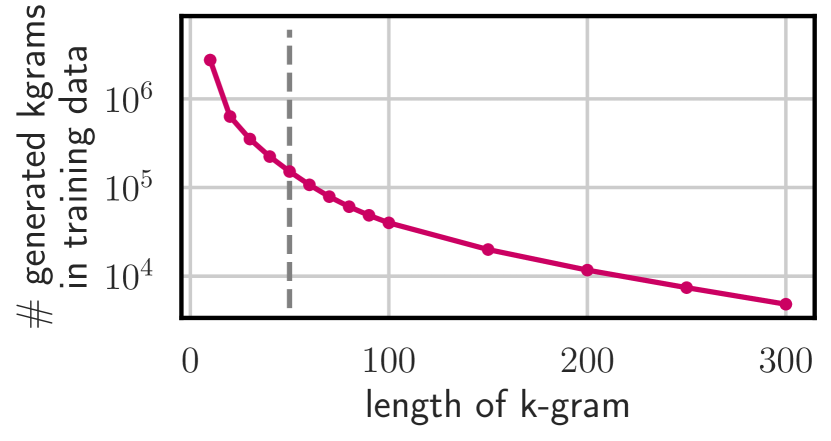

[2311.17035] Scalable Extraction of Training Data from (Production) Language Models
